ChatGPT As A Security and Compliance Assistant

How to use ChatGPT to extract and organize information from compliance documents

Imagine that you have a 300 page pdf, containing 100 security rules. Each rule has a number, name, resolution steps, plus additional information such as description and references. You want to codify these controls in order to automate security (for example as OPA policies). What do you do?

Recently I spoke with a company that was going through this process, codifying the CIS GCP Benchmarks into python scripts. I know of at least two other companies that went through something similar recently, besides software vendors such as Palo Alto, Azure, Prowler, Mondoo, Turbot, CIS Audit, and many others having to go through this process to generate some type of code based on pdf controls.

It is not an insurmountable problem, but the process of going back and forth copying and pasting from the pdf is a time sink with little value add. Compound that with the fact that these benchmarks are updated about every 2-3 years, and that most companies will have to track multiple regulatory controls, and suddenly you have a group of people that are stuck in a continuous loop doing these menial tasks. Could we automate this process?

This blog post is the first of a series, where I will document initial explorations I have done using ChatGPT to achieve some level of automation. In this first post I will describe manual steps that should benefit those that are not comfortable with code, but mention how these steps could be improved. In the next post I will go over how to train or fine tune a ML model to extract information from CIS benchmark documents.

There were many security controls we could use for this exercise, like PCI DSS, FedRamp and others. I picked CIS GCP Benchmarks because it is something I was using recently, but the overall idea should apply to any situation requiring extracting values from semi-structured documents.

Before we start, let’s go over some background on CIS.

CIS

From Wikipedia:

The Center for Internet Security (CIS) is a 501(c)(3) nonprofit organization, formed in October, 2000. Its mission is to make the connected world a safer place by developing, validating, and promoting timely best practice solutions that help people, businesses, and governments protect themselves against pervasive cyber threats. The organization (..) has members including large corporations, government agencies, and academic institutions.

CIS publishes the Critical Security Controls, and the CIS Benchmarks.

The CIS Critical Security Controls and CIS Benchmarks are two different security standards that have some overlapping aspects, but serve different purposes. The Critical Security Controls provide general best practices for overall security, while the Benchmarks provide specific recommendations for secure configuration of individual systems and applications. Some of the Critical Security Controls can be mapped to specific Benchmarks, such as Control 1 to Configuration Management and Control 5 to Database Security, but not all have direct mappings as they are more general in nature.

For this blog series we will focus on the Google Cloud Computing Platform Benchmarks (free, requires email registration). Let’s check this document and the proposed challenge next.

CIS GCP Benchmark

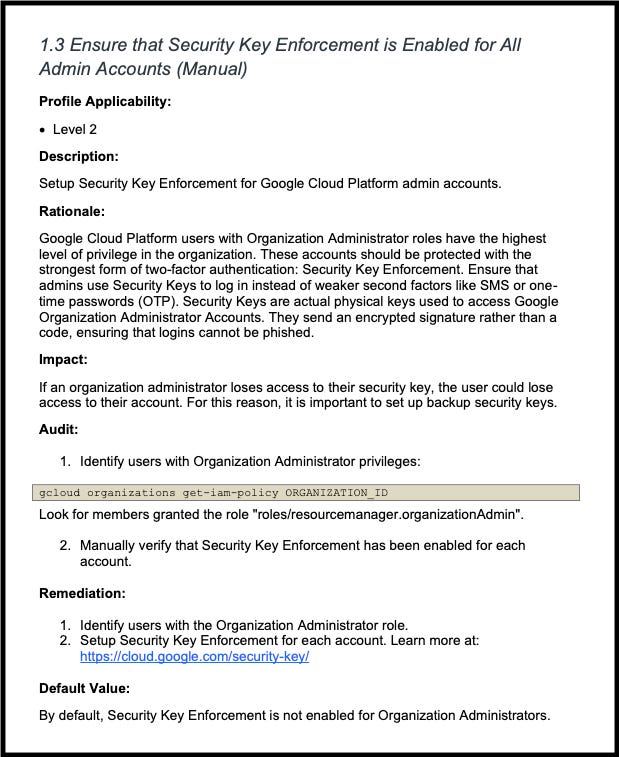

The CIS GCP Benchmark is a semi-structured document, where each rule has the format:

<Number> <Title>

<Additional info>

<Audit>

<Additional info2>

Here is an example page:

The same format is used across all 100+ CIS benchmarks, with minor variations. The proposed challenge is the following:

Extract a list of all rule names and numbers

Extract the content of the “Audit” block, and have it associated with the rule

Generate stubs of python code for each rule, referencing the name and number

If possible, generate some starter code based on the content of “Audit”

This is not a new problem, and some solutions have been proposed and made public:

Existing Solutions

AWS’s CIS Benchmark pdf parser

A great example of using regex, and in theory it could help us with our goal, but if you check the regex formulas, there are slight variations depending on the pdf. It highlights that regex is a powerful tool, but potentially brittle and hard to debug.

None of these examples worked for the GCP Benchmarks and even though I invested some hours trying to come up with the magical incantation, I still believe there should be a more robust approach than regex. It did help me start identifying some patterns in the rules, such as that the name always ends with (Manual) or (Automated).

Jamf’s CIS Mac Benchmarks

Mischa van der Bent from Jamf is one of the leading advocates for automation of the macOS Security Compliance Project, and he mentions automating pdf rule extraction in this repository, although the file he refers to for the extraction, generate-baseline.py is in another repository. There is also a third repository with the rules.

At the end of the day it is an impressive project, but they combine rule extraction with monitoring and generating reports, using both python and bash and leveraging macOS native functionality. It is a great source for inspiration, but it can become a rabbit hole and wasn’t generic enough for the proposed use case.

Rodrigues Jorgex Python Code

I found this blog post recently, and it made me happy to see I am not the only one frustrated with the task of codifying the controls. In his Medium post, Rodrigues goes over the steps of creating python code to extract the controls as a dictionary.

It is a valid approach, but once again it leverages regex which we have seen is brittle when applying to different versions or different benchmarks.

Having evaluated a few alternative solutions, let’s see how we could leverage ChatGPT for this task.

ChatGPT - Manual Steps

Here we will go over a few steps that still require some back and forth, but serve to give us an understanding of ChatGPT capabilities.

Get a list of all Benchmark rules

Open the CIS GCP Benchmark pdf, go to the 3rd page and manually copy the index information. Due to ChatGPT token limitations, you will need to perform this step a few times.

Go to ChatGPT. Give it a few examples, ask it to format the information as a table and paste the initial portion of the text.

Here is the prompt I used:

Given the following examples: 1 Identity and Access Management.....................................................................................14 1.1 Ensure that Corporate Login Credentials are Used (Manual) ...............................................................15 1.2 Ensure that Multi-Factor Authentication is 'Enabled' for All Non-Service Accounts (Manual)................17 describing three chapters, where: for the first one, CHAPTER_NUMBER is 1, CHAPTER_NAME is Identity and Access Management for the second one, CHAPTER_NUMBER is 1.1, CHAPTER_NAME is Ensure that Corporate Login Credentials are Used (Manual) for the third one, CHAPTER_NUMBER is 1.2, CHAPTER_NAME is Ensure that Multi-Factor Authentication is 'Enabled' for All Non-Service Accounts (Manual) Provide a table with two columns, CHAPTER_NUMBER and CHAPTER_NAME for the following: <...pasted text>

ChatGPT will output the following:

You can copy this html table and paste it to Excel (I tried Google sheets but apparently it only supports importing external html tables). Excel will fill the cells correctly, and once you have performed this operation for the entire index you can download the file in csv format.

Not the most automated, but much easier than going back and forth to copy and paste each rule, and it didnt require a regex. 😊 In the last section of this blog post I will introduce a more automated approach with python.

Going back to our original challenge, we have completed the first task. Task number 2, extracting the content of “Audit” is a bit tricky, so we will put this on hold for now and work on step 3, generating the python stubs.

Automate python stub creation

Having the csv file and some basic python knowledge should make this a simple task. But let’s assume you have no coding experience or that your python chops are a bit rusty. Could ChatGPT help us with some boilerplate python code?

O achieve the desired output I used a sequence of queries:

using python, how to create one empty file for each value in the first colum and each row, in a csv file

<generated code>

next,

how to modify the code to skip the first row

<updated code>

then

how to generate the files inside a subfolder called "constraints"

<updated code>

and finally

using the same code, can you add a comment at the top of the file, using the information from the first and second column of each row?

The resulting code can be found here. Given a csv file with two columns, it will generate a new folder and create a python file for each row, with a comment in each file referencing the rule number and name. Not rocket science but kinda cool with almost zero effort 😊 .

Now let’s see what can be done with requirement 2 and 4 of the original challenge.

Generating python code for each rule

Unfortunately I wasn’t able to automate the extraction of the “Audit” blocks without using regex. Even the regex was not that simple because at the end of some rules there is text referring to the CIS controls that follow a similar pattern to rule names.

Putting this on hold for a while, we can check - is the information from the “Audit” block sufficient for ChatGPT to generate python code using the GCP Python library?

Unfortunately after a few tests by copying and pasting the audit block to ChatGPT with instructions such as

use google cloud python library to create python code to address the requirement:

For each Google Cloud Platform project, folder, or organization: 1. Identifynon-serviceaccounts. 2. Verify that multi-factor authentication for each account is set. 3. Return a list of any accounts that do not have multi-factor enabled

Presented underwhelming results. Perhaps leveraging CodexGCP would yield better results, but we are still dependent on the text of the Audit block, and either way at least for the GCP benchmarks not every control has straight forward CLI commands or specific instructions. So steps 3 and 4 are no-go.

As we have seen, the ChatGCP browser UI is great for testing what is possible with the tool, but has no configuration options and is constrained by token limits.

On the next section we go over OpenAI API which addresses some of these shortcomings. My original intention was to leverage this API to execute the same instructions in an automated way, however at the end of the day because of the token limitations I ended up having to use a regex approach 😥.

Let’s check out what is available on OpenAI API.

OpenAI API

The API offers more flexibility that the UI, and although it is paid you get a $18 credit for the first 90 days.

To explore the API you will have to login to the platform and create an API key associated with your account.

Once you have the API key you can use the python library to call it such as

def GPT_Completion(texts):

openai.api_key = "API KEY"

response = openai.Completion.create(

engine="text-davinci-002",

prompt = texts,

temperature = 0.6,

top_p = 1,

max_tokens = 3000,

frequency_penalty = 0,

presence_penalty = 0

)

return(response.choices[0].text)

You can find detailed information about the different models and available parameters in the documentation.

The biggest challenge I faced when using the API was the token limits. From the docs:

Depending on the model used, requests can use up to 4097 tokens shared between prompt and completion. If your prompt is 4000 tokens, your completion can be 97 tokens at most.

The limit is currently a technical limitation, but there are often creative ways to solve problems within the limit, e.g. condensing your prompt, breaking the text into smaller pieces, etc.

This means that sending the entire pdf content and leveraging the original query with examples wouldn’t work, because it goes over the token limit.

My next attempt was to extract just the 4 initial index pages, since for the initial challenge that’s the only content necessary.

However this still resulted in too many tokens. The next step was to clean it up a bit, removing the multiple unnecessary dots.

This kept on going, until I realized I had created a complete regex that was extracting the necessary information. In all honesty I tried to leverage ChatGPT to generate the regex, but there was always a problem with the generated code. At the end of the day I just created the regex by hand using text samples and regexr.com, it wasn’t that complicated.

In conclusion, I wasn’t able to avoid using regex, however trying to reduce the tokens for ChatGPT resulted in sequential steps with modular regexes, which are more flexible and robust than a single exact formula.

The current version works for GCP and AWS benchmarks, and about 95% of the Kubernetes benchmarks.

The code can be found in this Git repository.

Next steps

This was an interesting exploration and it showed me that ChatGPT can be used as an assistant for small tasks, such as reformatting text snippets or generating a few paragraphs, but for more robust outcomes either the paid version or a different approach should be considered.

The code from the Diplomat repository can still be improved - I will happily accept PRs to make it support other Benchmarks and to potentially extract additional sections from the pdf.

However that will mostly be an exercise in regex tuning, and I still believe there is potential to use AI/ML to address the use case described at the start.

In the next blog post I will document the steps taken to fine tune NanoGPT to recognize CIS Benchmark patterns. I believe there will be some manual effort required for preparation, but hopefully it will result in a more robust solution that the python regex in Diplomat. More to come!

How about you, have you used ChatGPT for similar tasks? How about alternate solutions like Google’s DialogFlow, Named Entity Recognition or Tesseract’s OCR? Comment below!