Book Review: Rational Cybersecurity for Business

Author Dan Blum brings real-world insights from his consulting experience, and shares a framework for implementing a successful security and risk transformation strategy.

How to create a security culture, clarify roles and improve communication? How to tailor a control baseline based on the organization’s maturity level? How to plan for cyber resilience?

These questions and many others are addressed in Dan Blum’s book, based on his 30+ years of experience in security consulting. In addition to stories from the field, best practices and specific recommendations, he offers a template for how to create a Cybersecurity success plan covering six focus areas:

Security governance and culture

Risk management

Control baseline

IT and security simplification

Access control

Cyber resilience

The book is open access (Creative Commons) and available as a PDF/EPUB or as a hardcover through Amazon. Please support the author by buying the book if you are in a corporate setting!

In this blog post I will provide a brief summary of the chapters, and a few comments based on my experience in the field.

Chapter 1: Executive Summary

The book starts chapter 1 with an executive summary, dispelling myths and defining “Rational Cybersecurity” as

“An explicitly-defined security program based on the risks, culture and capabilities of an organization that is endorsed by executives and aligned with its mission, stakeholders, and processes.”

From this definition, the author expands the broader security context, how to determine priorities, and a very relevant maturity model to help organizations decide how to start the security journey:

With this initial overview concluded, he starts discussions around governance and culture.

Chapters 2-4: Security governance and culture

In these chapters, the author goes over roles and responsibilities, a suggested RACI (Responsible, Accountable, Consulted, Informed) matrix, board oversight and how to address initial blockers.

The discussion about poor coordination as the source of initial blockers is very relevant in my opinion, and highlights the importance of ensuring buy-in from key stakeholders to allow for the “rational” aspect of the cybersecurity plan. Initiatives that do not have this initial alignment are likely to have a lower chance of success and to become a source of frustration for those immediately impacted. There is also an extensive exploration of hiring and motivation, with anecdotes from the field.

Moving from individuals to the broader organization, chapter 3 goes over governance models, the trade-offs and how to achieve a productive middle ground. Here is an illustration of the three security governance models discussed:

The book goes into great depths on these models, and then expands into approaches to manage related areas such as policy documents, budget allocation, and delivery pipelines.

Finally, chapter 4 talks about communication strategies, cultural change and how to measure improvement. It offers actionable examples illustrated by quotes from CISOs who have gone through this type of corporate transformation.

Next, the book goes into the topic of risk - quantifying, measuring, and managing.

Chapter 5: Risk management

There is a quote in this chapter that illustrates the content to be covered, highlighting the importance of being prepared:

“To win one hundred victories in one hundred battles is not the acme of skill. To subdue the enemy without fighting is the acme of skill”

Sun Tzu, The Art of War

Chapter 5 begins by noting that the author will leverage the ISO 31000 risk management framework and Open Factor Analysis of Information Risk (FAIR), a model that is Creative Commons licensed for non-commercial applications.
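To make the quantitative side a bit more concrete: at its top level, FAIR breaks risk down into how often loss events happen and how large the losses are. Below is a minimal sketch of that idea, not the book's method. The function name, the single-event-per-year simplification, the triangular loss distribution and all the numbers are my own assumptions for illustration.

```python
import random


def simulate_mean_annual_loss(
    event_probability: float,
    min_loss: float,
    likely_loss: float,
    max_loss: float,
    trials: int = 10_000,
    seed: int = 0,
) -> float:
    """Estimate the mean annual loss for one risk scenario by simulation.

    Assumption: at most one loss event per year, with the given probability.
    """
    rng = random.Random(seed)  # fixed seed keeps runs reproducible
    total = 0.0
    for _ in range(trials):
        # Frequency side: does a loss event occur in this simulated year?
        if rng.random() < event_probability:
            # Magnitude side: draw a loss between min and max, peaked at likely.
            total += rng.triangular(min_loss, max_loss, likely_loss)
    return total / trials


# Hypothetical scenario: 10% yearly chance of an incident costing
# between 50k and 1M, most likely around 200k.
estimate = simulate_mean_annual_loss(0.10, 50_000, 200_000, 1_000_000)
print(f"Estimated mean annual loss: {estimate:,.0f}")
```

Even a rough estimate like this gives stakeholders a number to compare against the cost of a control, which is the kind of conversation the chapter is trying to enable.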

The chapter goes over terminology for discussing risk, analysis of the two models and a proposed combination of both, illustrated below:

From there, the author shares ideas on how to obtain top-level sponsorship, define accountabilities, risk appetite, lightweight risk triage scenarios, and how to promote the plan across internal stakeholders. There are extensive discussions on how to identify, assess and treat risks, besides a framework for continuously monitoring and updating risks:

Control baselines are the topic of the next chapter.

Chapter 6: Establish a Control Baseline

In this chapter, the author compares a control baseline to the locks on a door deemed secure. In essence, control baselines are a way of tracking the basic controls that achieve the defined security objectives.

He mentions some control frameworks - ISO 27001, NIST 800-53 rev 4, ISACA COBIT, CIS top 20 (top 18) - which vary in scope, and once again emphasizes the “rational” aspect of cybersecurity. One could have a copious number of controls, but at the end of the day businesses are bound by time, money and resource constraints. With this in mind, he suggests a minimum viable control baseline, to be aligned with the business’ unique needs.

Five common challenges when defining controls are discussed:

Too many controls

Difficulty risk informing controls

Controls without a unifying architecture

Lack of structure for sharing responsibility with third parties

Controls out of line with business culture

In my opinion these are all relevant, but two stand out as particularly important for companies going through a cloud adoption journey:

Controls without a unifying architecture

I saw this at a customer that had strong controls and a risk and governance plan for its on-premises workloads. These were not updated as the organization migrated to the cloud. The modernized workloads were successfully brought to the new cloud platform, but the dev team did not track the reasoning behind how they were configured. The compliance team struggled to catch up, adjusting the existing controls and testing procedures to this new reality in an emergency sprint. This is an important lesson for anyone starting a workload modernization project - companies should involve the security, risk and compliance teams from the start of the migration in order to avoid security debt.

In this particular case the company ran non-compliant cloud workloads for some months until the secure configurations were codified and associated tests created. A more robust approach would have been to engage the security team early and revise the cybersecurity governance plan to accommodate the new hybrid (cloud/on-premises) environment.
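As a rough idea of what "codifying secure configurations and associated tests" can look like, here is a minimal sketch. The control IDs, the configuration keys and the `failed_controls` helper are all hypothetical, not taken from the book or from any specific cloud provider.

```python
from typing import Callable, Dict, List

# Hypothetical control baseline: control ID -> check against a resource config.
# A missing key counts as a failure, so nothing passes by omission.
BASELINE: Dict[str, Callable[[dict], bool]] = {
    "encryption-at-rest": lambda cfg: cfg.get("encrypted", False) is True,
    "no-public-access": lambda cfg: cfg.get("public_access", True) is False,
    "access-logging": lambda cfg: cfg.get("logging_enabled", False) is True,
}


def failed_controls(config: dict) -> List[str]:
    """Return the IDs of baseline controls the configuration does not meet."""
    return sorted(cid for cid, check in BASELINE.items() if not check(config))


# Hypothetical storage bucket configuration as it might come out of a migration.
migrated_bucket = {"encrypted": True, "public_access": True}
print(failed_controls(migrated_bucket))
```

Checks like these can run in the deployment pipeline, so the compliance team sees a failing control before the workload goes live rather than months later.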

Lack of structure for sharing responsibility with third parties

One of the benefits of migrating workloads to the cloud is the “shared responsibility model”, a security model offered by the major cloud providers. It specifies the areas that are the responsibility of the provider - infrastructure and related personnel - and those that are the responsibility of the company - workloads built on top of cloud infrastructure. Some cloud providers such as Google Cloud even partner with insurance companies to help lower cyber risk policy costs by sharing mutually agreed upon workload data and advising on security best practices (GCP Risk Protection Program).

One interesting situation that can arise is when a company creates a Software as a Service (SaaS) product, hosted in the cloud. If their customers only leverage the product through dashboards and APIs, the security responsibility considerations between cloud/company should be straightforward.

However, I have worked with two companies that had more sophisticated SaaS products - they hosted customer workloads (SAP and Kubernetes) in their cloud tenant. This unleashed a labyrinth of complexities, since the companies now had to decide how much of the underlying platform to expose to their customers, how to ensure secure multi-tenant isolation, and how to display logs. This was further complicated by the Kubernetes SaaS also requiring PCI compliance. The first company cancelled the project not for technical or security reasons but due to business misalignment, and the second project was still ongoing the last time I checked 🙂.

The remainder of Chapter 6 presents 20 proposed security controls by domain, and discussions on how to implement them.

Going back to the topic of “rationalization”, it is critical to ensure that all the efforts discussed so far are achievable and aligned with the reality of the business. This is the focus of chapter 7.

Chapter 7: IT and security simplification

This chapter starts with a strong sentence:

“What you cannot manage, you cannot secure, and a control baseline can’t be fully or efficiently implemented across a chaotic IT environment.”

The chapter goes on by sharing anecdotes from the field, and makes the case for consolidating vendors and platforms to simplify visibility and management of resources.

It also cites risks of security/business misalignment, such as the emergence of Shadow IT to bypass perceived inefficiencies and blockers, and suggests that IT evolve from being a provider to being a broker. This is particularly relevant for software developers and cloud infrastructure users such as data scientists and other specialists, and follows the “shifting left on security” mentality. “Shifting left” means ensuring that security considerations are in place from the onset of development, leveraging secure defaults, automation and immutability.

This is a very broad topic - a discussion about cloud governance, automation and enabling secure self service of resources can be found in the Cloud Governance Model blog post.

From simplifying security, we go to Access Control next.

Chapter 8: Access Control

The title of this chapter is actually “Control Access with Minimal Drag on the Business”. It highlights one of the biggest challenges of implementing Access Control in a corporate environment - protecting sensitive resources and monitoring utilization while keeping the systems usable and low-friction for operators.

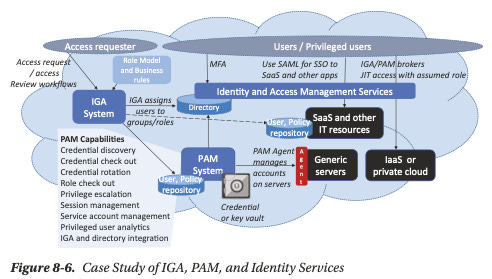

The author presents ideas on how to build IAM capabilities that address modern workforce expectations of using “consumer-grade” devices for work, covers multiple strategies, and discusses Privileged Account Management (PAM) and Identity Governance and Administration (IGA). He mentions traditional tools and even presents a diagram of a PAM + IGA solution, with the caveat that “only a few vendors yet combine both”. Here is the diagram:

In my opinion the author makes solid points. However, there is an opportunity to go a bit further, beyond what is presented in the book, and explore some modern solutions.

To start, at least for modern workloads, companies should consider reducing human interaction with and access to sensitive resources. Secrets and other sensitive information shouldn’t be accessed directly by a user account, only by service accounts - those associated with an application or microservice. The results of such access can then be exposed to human users through dashboards and APIs.

In cases where human access is truly needed and has to be constrained and monitored, such as in the PAM use case above, modern solutions such as HashiCorp Boundary and StrongDM implement an architecture very similar to the PAM + IGA diagram. HashiCorp Boundary can plug into multiple identity platforms where users have their identity, can be integrated with Vault to manage permissions, and allows specifying which resources are available to each user in a monitored setting, all while leveraging a scalable and modular architecture built for distributed environments.

Another way to modernize Access Control is to use temporary credentials, particularly with cloud providers. AWS, Azure and GCP all have CLIs with login commands that grant temporary API access from a terminal. AWS Cognito, Azure AD and Google Identity Platform also provide identity solutions for specific use cases on their respective platforms. Cloud-agnostic approaches to temporary credentials can be found in tools such as HashiCorp Vault, which can dynamically create credentials for cloud providers, databases and other services, and in the recent Terraform Dynamic Provider Credentials feature available in Terraform Cloud. Note that using dynamic credentials in Terraform was already possible with the Terraform Vault provider. What this Terraform Cloud feature adds is a way of authenticating to external providers through OIDC tokens, removing the need to hardcode credentials or rely on platform-specific service accounts associated with the VM/container running the CI/CD tool or Terraform.
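The core idea behind temporary credentials is simply that every credential carries its own expiry. Here is a minimal sketch of that concept. It is not how Vault or any cloud provider implements it, and `TemporaryCredential`, `issue_credential` and `is_valid` are hypothetical names of my own.

```python
import secrets
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class TemporaryCredential:
    token: str
    role: str
    expires_at: datetime


def issue_credential(role: str, ttl: timedelta, now: datetime) -> TemporaryCredential:
    """Issue a random token for a role that is only valid for the given TTL."""
    return TemporaryCredential(
        token=secrets.token_urlsafe(32),
        role=role,
        expires_at=now + ttl,
    )


def is_valid(cred: TemporaryCredential, now: datetime) -> bool:
    """A credential is valid strictly before its expiry time."""
    return now < cred.expires_at


# Hypothetical usage: a 15-minute read-only database credential.
issued_at = datetime.now(timezone.utc)
cred = issue_credential("db-readonly", timedelta(minutes=15), issued_at)
print(is_valid(cred, issued_at + timedelta(minutes=5)))
print(is_valid(cred, issued_at + timedelta(minutes=20)))
```

Because the credential expires on its own, a leaked token is only useful to an attacker for a short window, and there is nothing long-lived to hardcode or rotate by hand.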

Regarding service-to-service authentication, a Zero Trust approach is considered best practice in the industry. In it, services can only communicate with one another based on explicit permissions, with every other communication being denied. An example would be a web commerce application composed of three microservices: inventory, shopping cart, and payments. In this case, inventory should be able to interact with shopping cart, and vice versa, but not with payments. Only shopping cart should be able to interact with payments. This reduces the attack surface, so if the inventory microservice is compromised, the damage that can be done is limited. It also helps reduce compliance scope for standards such as PCI DSS. Zero Trust is usually implemented with a category of tools dubbed service mesh; two examples are Istio and Consul Service Mesh.
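The web commerce example can be sketched as a default-deny allowlist. This is only an illustration of the policy logic, not how Istio or Consul express it. The service names, the `ALLOWED_CALLS` set and both helper functions are hypothetical, and I am assuming that calls to payments only go in one direction, from shopping cart.

```python
from typing import FrozenSet, Set, Tuple

# Hypothetical allowlist of (caller, callee) pairs. Anything not listed is denied.
ALLOWED_CALLS: FrozenSet[Tuple[str, str]] = frozenset({
    ("inventory", "shopping-cart"),
    ("shopping-cart", "inventory"),
    ("shopping-cart", "payments"),  # assumption: one-way, cart calls payments
})


def is_call_allowed(caller: str, callee: str) -> bool:
    """Default deny: only explicitly listed pairs may communicate."""
    return (caller, callee) in ALLOWED_CALLS


def reachable_from(compromised: str) -> Set[str]:
    """Services an attacker could call directly from a compromised service."""
    return {callee for caller, callee in ALLOWED_CALLS if caller == compromised}


print(is_call_allowed("inventory", "payments"))
print(reachable_from("inventory"))
```

With this policy, a compromised inventory service can only reach shopping cart directly, which is exactly the containment the paragraph above describes.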

Finally, for Data Science practitioners, tools such as Looker, Tableau and Microsoft Power BI offer multiple IAM controls for their platforms. Looker in particular has an interesting modular architecture, focusing on the user presentation layer while allowing BigQuery to serve as the backend. This helps address scalability, data provenance and data exfiltration concerns.

We are almost at the end of the book. The next chapter goes over ensuring business continuity through a robust cyber resilience strategy.

Chapter 9: Cyber resilience

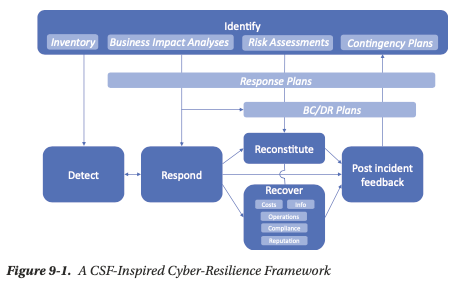

Cyber resilience is the ability of an organization to continue doing business despite a cyberattack. The author highlights Incident Response (IR) as a function of cyber resilience, and makes the distinction that strictly operational incidents such as hardware failures fall under the purview of the business continuity function.

In this chapter, he shares the NIST Cybersecurity Framework:

Throughout the chapter, he details the elements of “Identify”, “Protect”, “Detect”, “Respond”, and the steps to develop a cyber resilience response plan.

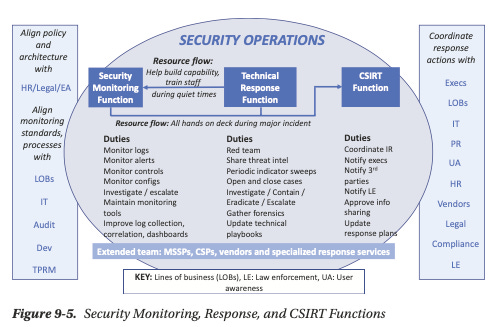

In this chapter he also covers the different roles in an Incident Response Program, presenting this framework:

Finally, he ends the chapter with detailed suggestions for how to recover from incidents caused by cyberattacks and operational outages, and how to deal with crisis response. I highly recommend a deeper look into chapter 9, since this is a critical area that is often overlooked by companies.

To conclude the book, chapter 10 provides guidelines for how to create a “Rational Cybersecurity Success Plan”.

Chapter 10: Making it Actionable

This chapter serves as an actionable review for the book. The author goes through each chapter, and presents questions to be asked when assessing the different focus areas of the plan and possible directions to take them.

He ends the book with a parting thought:

Don’t try to boil the ocean with your first Rational Cybersecurity Success Plan; make it an iterative process… “Baby steps takes us up Mount Everest”.

What about you, did you find this book useful? Do you disagree with how the author approached certain topics? Do you have similar stories to share? Comment below!